Legal teams know what they want to automate, but current tools require complex workflow builders that force lawyers to think like engineers.

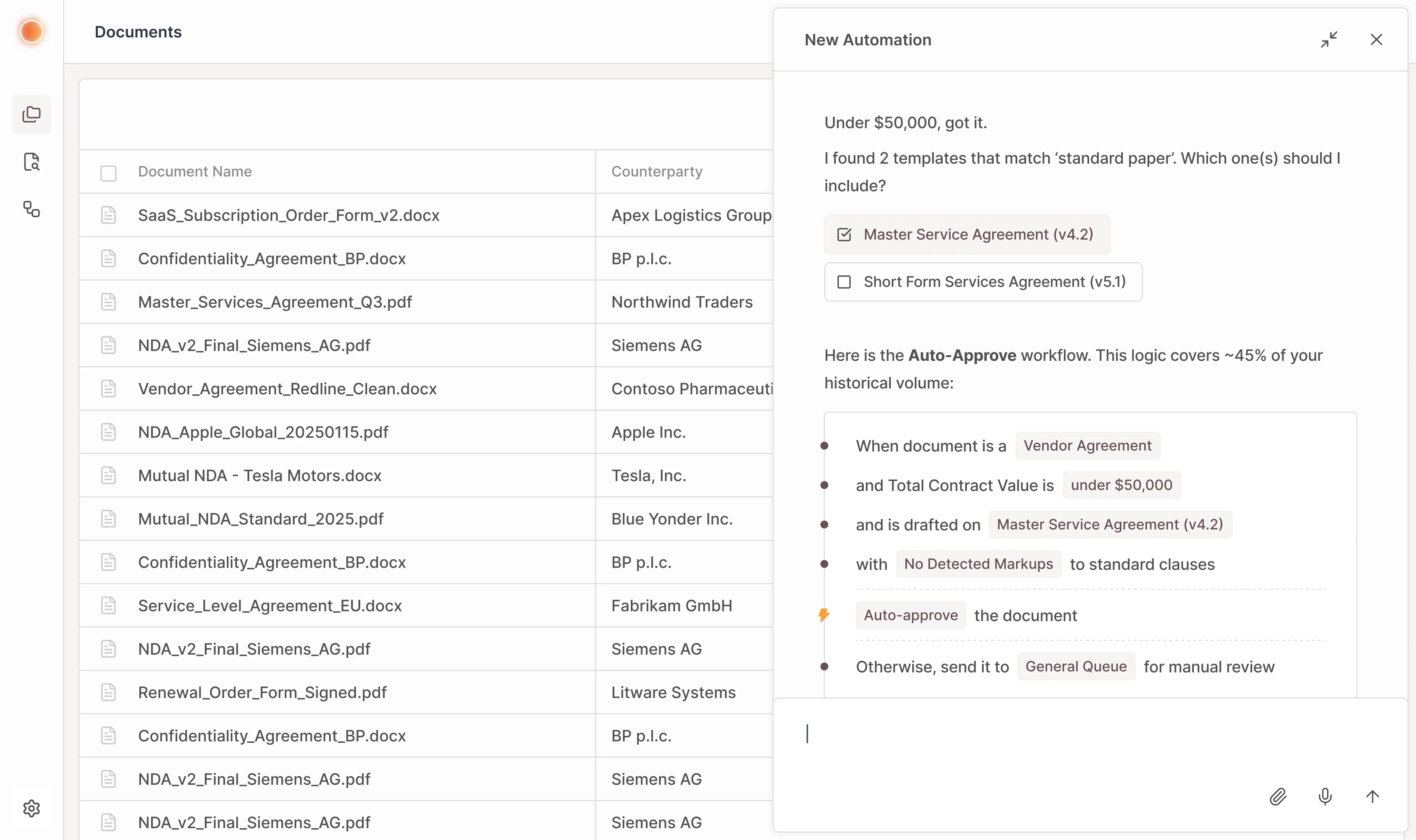

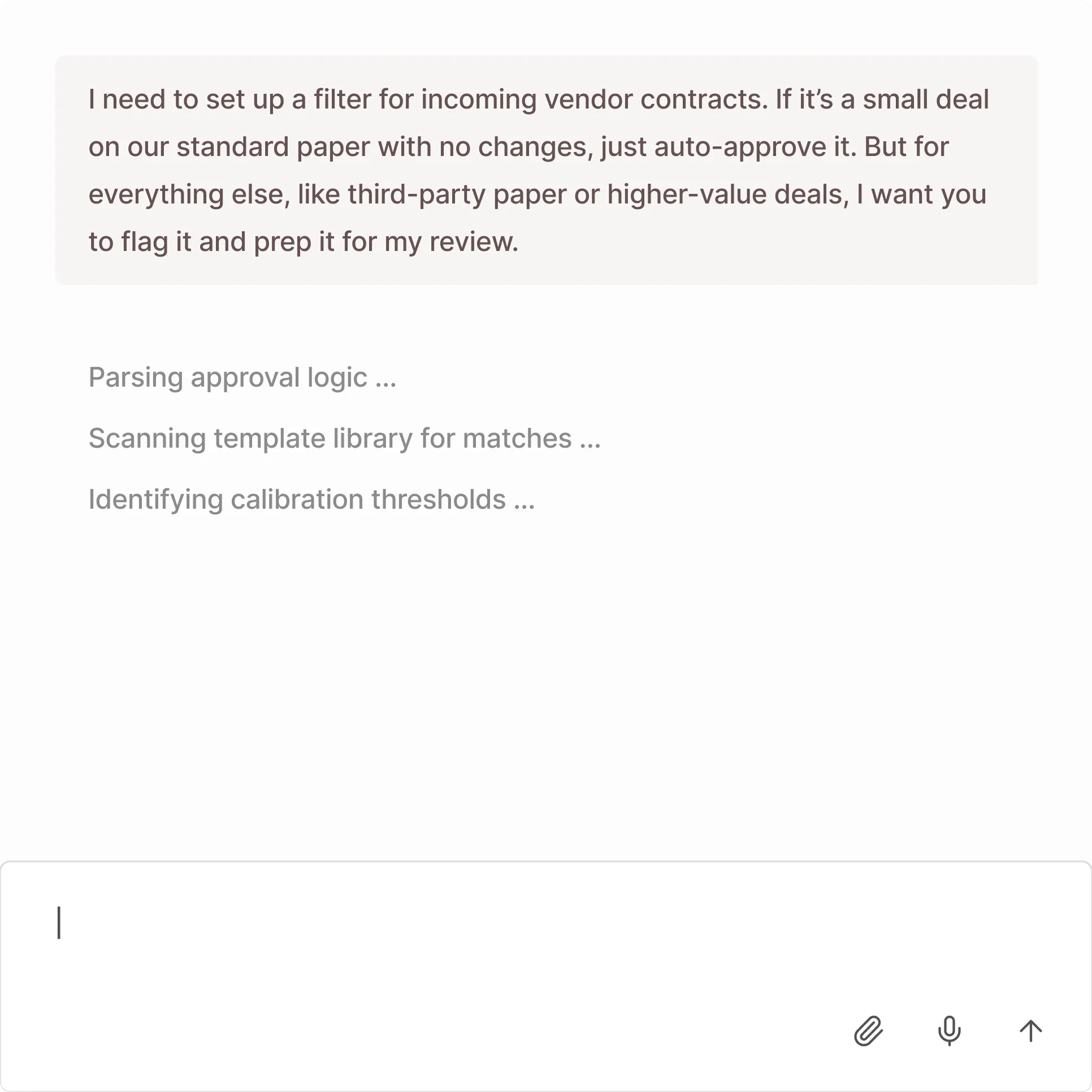

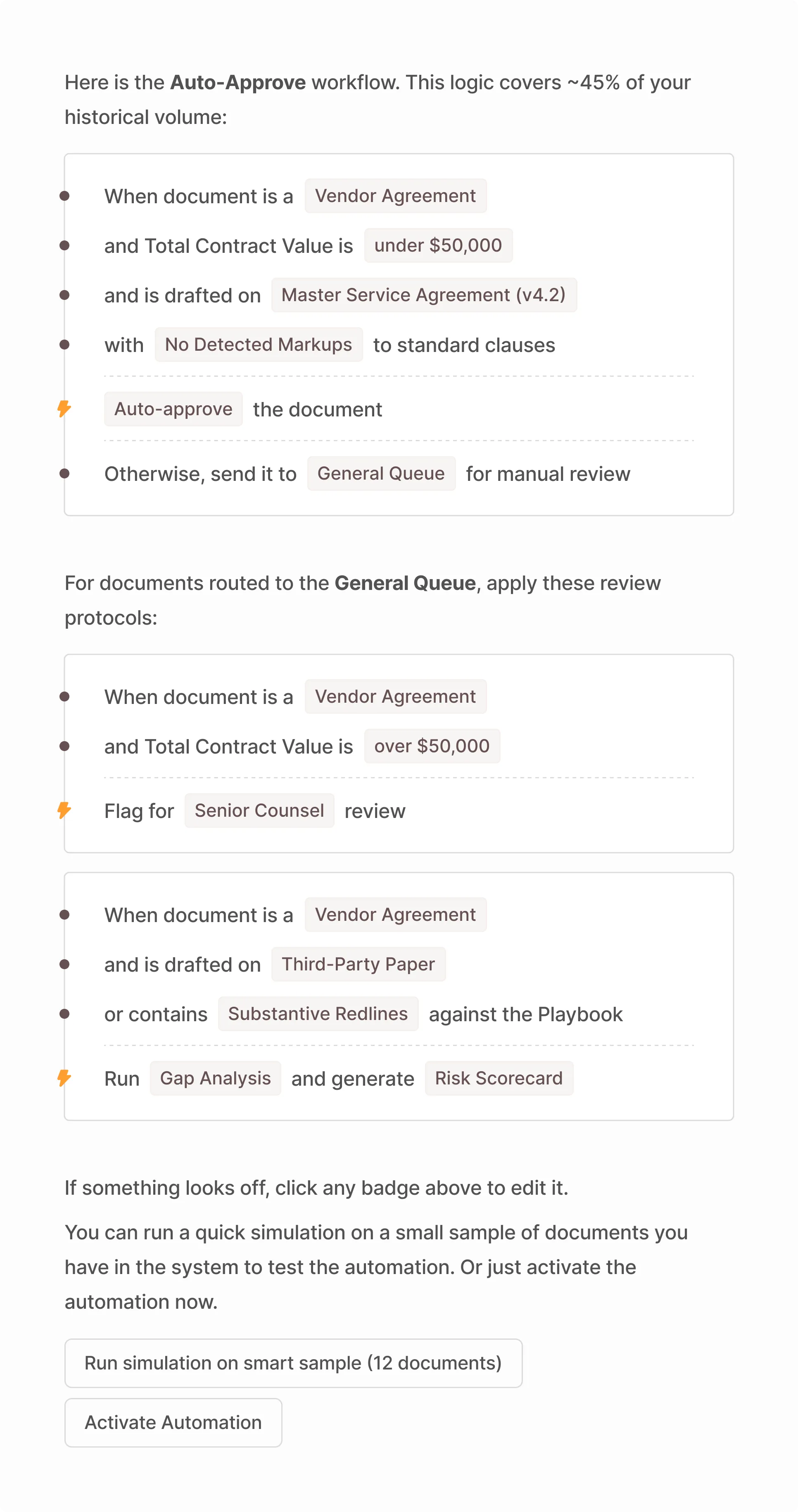

I explored an agentic interface in which users, instead of manually configuring logic, describe their intent in plain English. An LLM translates that intent into rules they can review and test.
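
As a rough illustration of that idea, and not the actual implementation, here is a minimal sketch of what a "rule you can check and test" might look like once the LLM has produced it. Everything in it is an assumption made for the example: the `Rule` shape, the operator names, the `contract_value` field, and the sample intent are hypothetical, and the LLM translation step itself is left out.

```python
from dataclasses import dataclass

# Hypothetical rule shape: the structured output an LLM might produce
# from a plain-English intent. All field names are illustrative.
@dataclass
class Rule:
    field: str      # which attribute of the record to inspect
    operator: str   # key into OPERATORS below
    value: object   # value to compare against
    action: str     # what should happen when the rule matches

# Small, fixed set of comparisons so a rule stays readable to a non-engineer.
OPERATORS = {
    "equals": lambda a, b: a == b,
    "greater_than": lambda a, b: a > b,
    "contains": lambda a, b: b in a,
}

def matches(rule, record):
    """Return True if the record satisfies the rule's condition."""
    if rule.field not in record:
        return False
    return OPERATORS[rule.operator](record[rule.field], rule.value)

def run_tests(rule, cases):
    """Return the cases where the rule disagrees with what the user expected."""
    return [
        (record, expected)
        for record, expected in cases
        if matches(rule, record) != expected
    ]

# Assumed intent: "Route any contract worth more than 50,000 to senior counsel."
rule = Rule(
    field="contract_value",
    operator="greater_than",
    value=50_000,
    action="route_to_senior_counsel",
)

# Test cases the user writes: a sample record and whether the rule should fire.
cases = [
    ({"contract_value": 75_000}, True),
    ({"contract_value": 10_000}, False),
]

failures = run_tests(rule, cases)
print(failures)  # an empty list means the rule behaves as the user expected
```

The point of the sketch is the division of labor: the lawyer states the intent and supplies examples, while the generated rule stays small and explicit enough to read back and verify.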